The Case for Agentic Tech

A pattern language for a new tech category

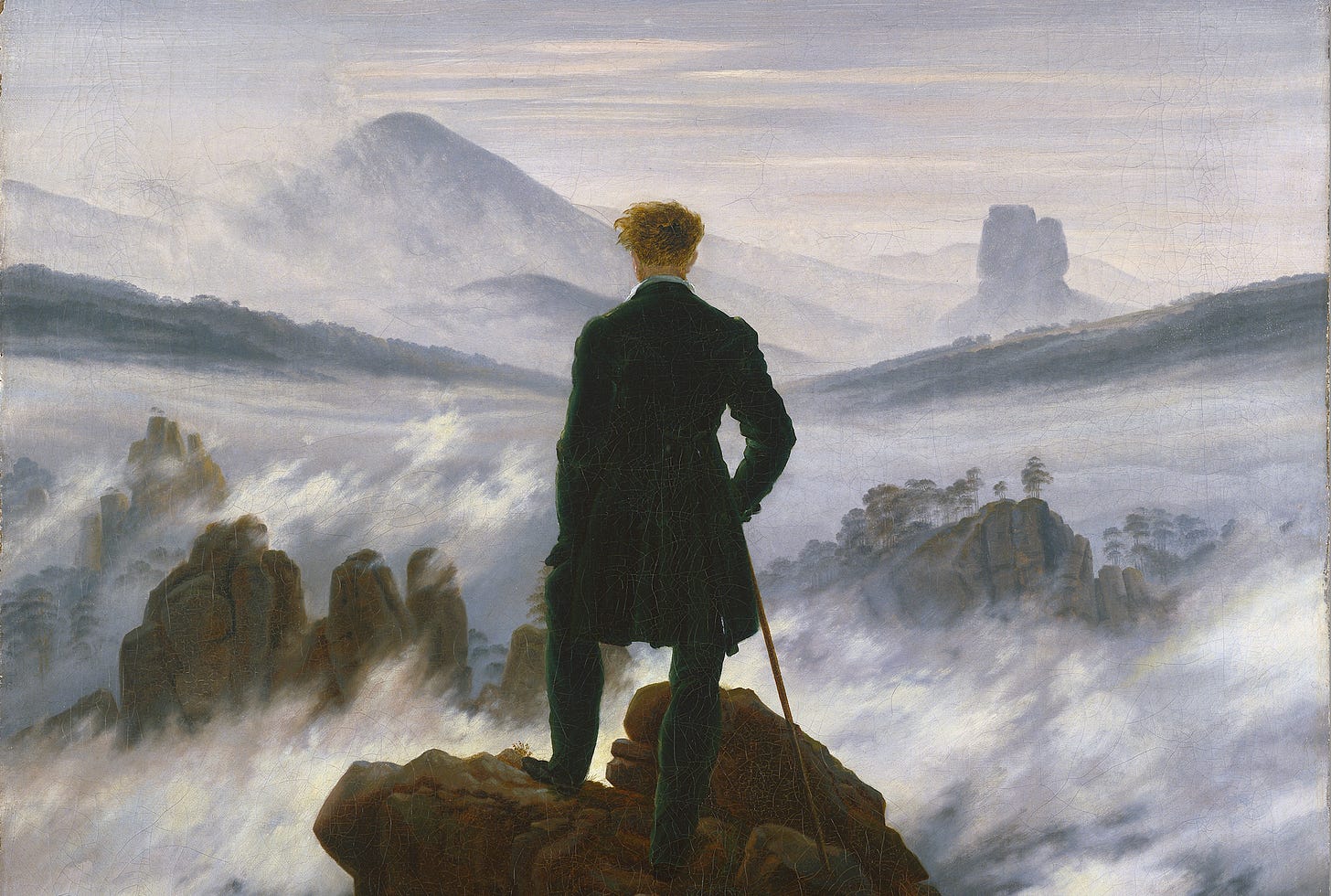

Credit: Wanderer Above the Sea of Fog, by Caspar David Friedrich. A lone figure faces an uncertain landscape, not to conquer it, but to take stock and orient within it. Agency in the fog promises no certain answers. It only demands that we ask the right questions.

In 2022, while writing my master’s thesis, I set out to define a privacy-preserving framework that could meet two demands at once: robust enough to protect user privacy across any digital surface, yet flexible enough to adapt to any user’s shifting, context-dependent boundaries.

I drew inspiration from information security, where we often refer to the CIA triad—Confidentiality, Integrity, and Availability. This model doesn’t prescribe procedures but defines an end-state: what secure looks like in terms of outcomes. If a system lacks any one of the three, it’s not secure. The triad gives practitioners an outcome to aim for and trusts their expertise and judgment to determine how to get there.

Meanwhile, I was struck by how clunky and brittle most privacy frameworks are by comparison. Unlike the outcomes-based clarity of the CIA triad in information security, privacy is dominated by prescriptive rulesets that seek to enumerate every protected surface or interaction in advance—tantamount to trying to predict the future!

These rulesets fail on two fronts (besides trying to prophesy privacy):

They’re inflexible. By narrowly defining what needs to be protected, they can only secure the surfaces and interactions they explicitly name…leaving everything else exposed.

They’re restrictive. Instead of defining the outcome and trusting privacy professionals to use their judgment to get there, they micromanage the process, thereby limiting innovative thinking, professional creativity, and adaptation.

So, what would an outcomes-based privacy-preserving framework look like?

I defined a new CIA: Confidentiality, Interoperability, and Agency.

The details of that framework live in my thesis—but the third condition, agency, is where the idea broke open.

Once I added agency to the triad, the research quickly outgrew privacy. Mapping the technologies and interaction patterns that support user agency pushed me beyond the limited goals of digital privacy toward a clearer, more complete vision of the destination: digital self-determination.

Agentic tech, then, became the products, protocols, and platforms paving the road toward that destination.

I started using the phrase agentic tech as a signal—an invitation to Find the Others. Who else was working across privacy, identity, cryptography, Web3, wallets, social platforms, policy, funding—whatever the domain—but aligned with the same North Star: human flourishing alongside, not despite, our technologies?

At first, I assumed there must be a backroom somewhere—a place where all the decentralized actors working toward these goals were already coordinating, conversing, conspiring.

Then I thought, I want to be in that room.

And then I realized: the room didn’t exist.

So I built it.

I set up a “lighthouse” to call in the rest of the fleet and give our mutual project a shared language and a few common principles. This lighthouse was a Slack channel and monthly Zoom firesides with experts I’d tap to come talk to the group and cross-pollinate knowledge across this joyfully decentralized, loosely organized, and values-aligned ecosystem of agentic technicians.

I wasn’t building an audience; I was convening the builders (founders, engineers, designers, investors, policy thinkers) who were already independently working toward something better. Many of us didn’t know each other yet, but we shared the same love of tech, optimism for the future, and instinct to course-correct the present.

The ecosystem didn’t exist yet. So others and I started forming it.

Back in 2022 (which wasn’t very long ago at all!), the conversations were scattered. People working on self-sovereign identity weren’t necessarily in dialogue with people rethinking interface design, or consent architecture, or trust frameworks in AI systems. But the philosophical throughline was the same: how do we build systems that preserve the user’s ability to understand, choose, exit, and meaningfully participate?

Over time, the “ships” started coming in and we converged into a bit of a navy. What started as a series of Zooms and Slack screeds slowly evolved into a movement and a tech category. Chad Fowler at BlueYard Capital teamed up with me to launch The Privacy Podcast to bring some of the content of our off-the-record Zoom firesides to a broader audience. Zoe Weinberg, then in the early stages of forming her fund, ex/ante, adopted the term as her investment thesis. And I started hosting in-person events in Austin, Tethics & Chill, with some pretty impressive guest speakers!

Today, we’re coordinating even more closely. A group chat is now active with over 100 builders, founders, and investors aligned around this mission.

Agentic tech is not a vertical. It’s a design philosophy.

It cuts across domains—digital identity, AI, security, UI/UX, governance—because the solution it names is structural. We’ve spent years optimizing for engagement, efficiency, and centralization. And in the process, we’ve lost the thread on user autonomy.

Agentic tech asks:

What does consent actually look like, and how do we balance speed and efficiency in digital interactions against robust, verifiable, dynamically informed consent?

How do we build systems that respect exit, reversibility, and refusal?

Can our infrastructure (including funding models and venture capital) support the full range of human decision-making, not just what’s trackable or profitable?

These aren’t abstract questions. They’re design constraints. They’re business risks. They’re policy challenges. And if we don’t address them head-on, the tools we’re shaping will continue to accidentally make us dumber, more credulous, more fragile, less resilient, less autonomous, and less capable of dealing with complexity, conflict, and cognitive load.

This Substack is where we’ll begin articulating what this ecosystem has quietly been working on. Some posts will map technical patterns. Others will surface emerging principles or new products—and the founders building them. Occasionally, my writers and I will zoom out to sketch what this all means for markets, governance, or trust in a digital world.

But at its core, this is about one thing: building technology that enhances human agency.

If you’re working on this, adjacent to it, or circling it without a name—welcome. There’s a growing cohort of us, and we’re just getting started.